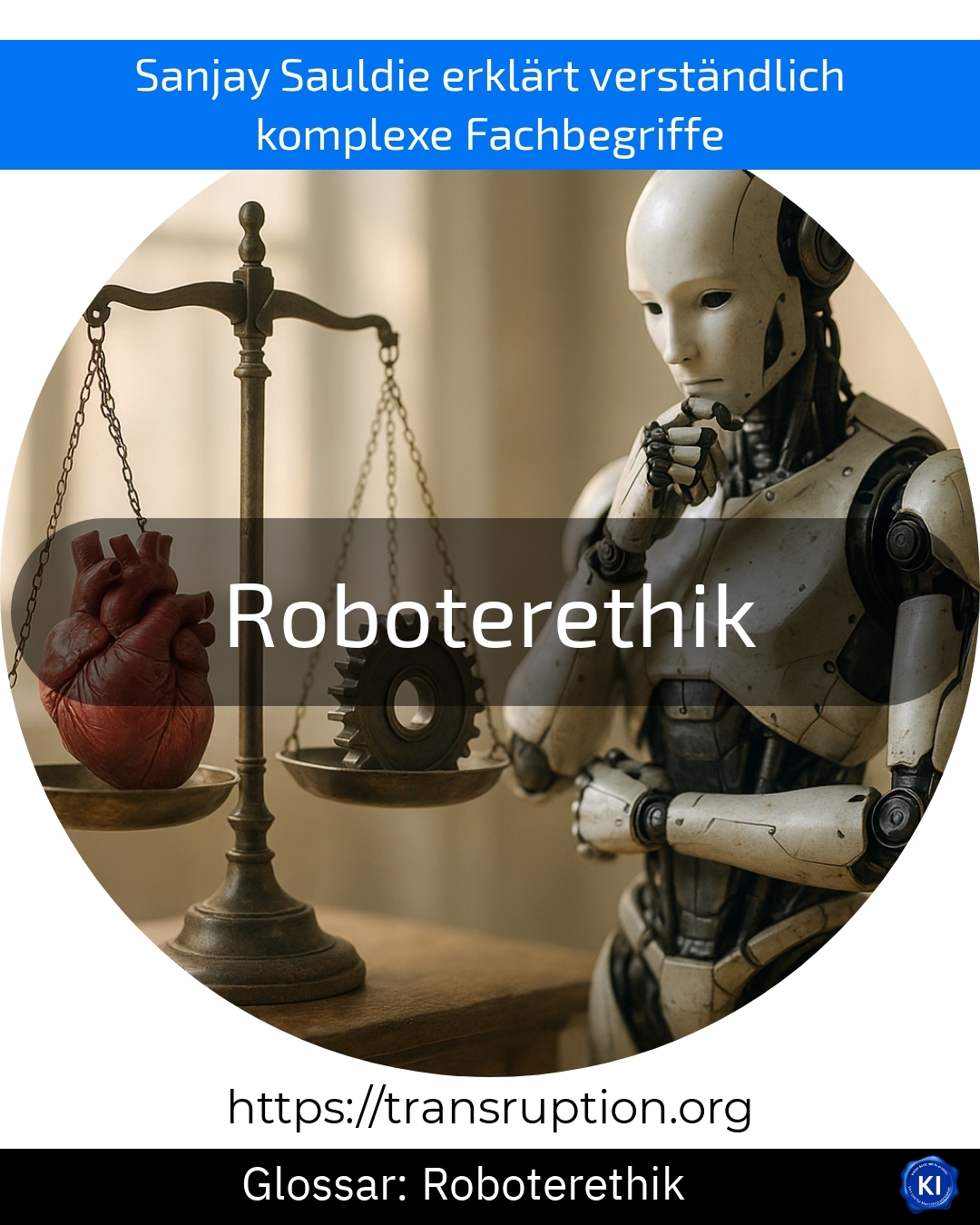

Robot ethics describes the ethical principles and rules by which robots and artificial intelligence should act. It is particularly relevant in the fields of artificial intelligence, robotics, and digital society. Its aim is to ensure that machines do not make decisions that harm people or treat them unfairly.

An example: in a hospital, care robots could support elderly patients. Robot ethics aims to ensure that these robots treat patients with dignity, protect their privacy, and respond correctly in emergencies, always acting in the patients' interest.

Robot ethics is becoming increasingly important as more and more tasks are automated. It therefore also addresses questions such as: Is a robot allowed to monitor someone? Who is responsible if a robot makes a mistake? Companies and researchers are developing guidelines to ensure that robots are used responsibly.

For decision-makers, robot ethics is a central consideration when introducing robots or AI systems into a company. It helps to build trust, minimise risks, and ensure that new technologies are handled responsibly.